| Acronym | GESTINTERACT |
|---|---|
| Name | Gesture Interpretation for the Analysis of Interactions Humans/Robots/Humans |
| Funding Reference | FCT - POSI/EEA-SRI/61911/2004 |
| Dates | 2005-09 to 2008-08 |
| Summary | When engaged in collaborative activities, people express their opinions, intents and wishes by speaking to each other using intonation, facial expressions and gestures. They move in physical space and manipulate objects. These actions are inherently linked to the individual’s cognitive perception. They have a meaning and a purpose, and they are adapted to both the environmental and the social setting. In this project, computer vision methods and techniques for interpreting human gestures will be developed, so that interaction and communication both among humans and between humans and machines can be analysed. The interaction will take place indoors, in a space covered by a network of cameras. The techniques to be developed shall allow the machine/robot to interpret the gestures of the people with whom it interacts, and also to interpret interactions among humans from their gestures and body posture. |
| Research Groups | Computer and Robot Vision Lab (VisLab) |
| Project Partners | ISR Coimbra (P) |

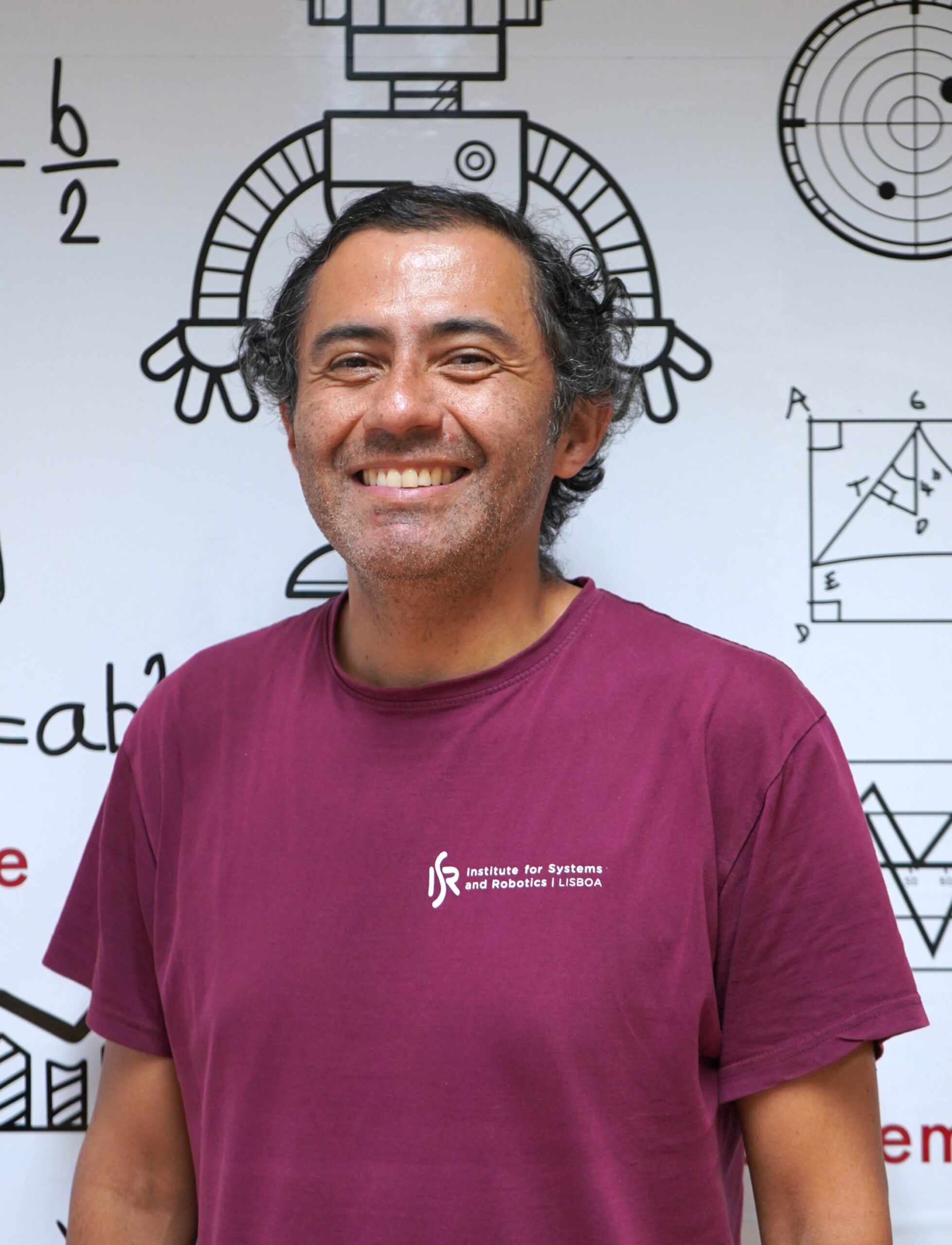

| ISR/IST Responsible | |
| People | |